The Way to Do the Thing Is to Do the Thing

To set the stage, it's a "bear market" in our industry, so morale is generally below-average and I've been doing my best to motivate and keep everyone excited.

One point I keep harping on is "the way to do the thing is to do the thing" — and there's a lot of truth to it. After all, a journey of 1,000 miles starts with one step, right?

OK, now: pan camera to our office in Palo Alto. It's about 9:30 p.m. on a Sunday night and I'm in my office feeling proud of myself for having pulled off a bunch of big migrations that day (including moving a live production database, deleting old Docker SHAs, etc.). And then I got to the final boss: center-thumbnails-prod.

center-thumbnails-prod is legendary in our company because it’s the first cloud storage bucket I made when I started building center in October 2021. My thinking was, "All good, I'll just store NFT media in this GCS bucket and figure out the rest later." Then I thought to myself, "If it ever gets to the point that this bucket is too big, that will be a good problem."

And that strategy worked. And it worked so well.

Over time we grew and indexed more and more chains and rendered more media. And in the back of my mind, I knew that one day we’d have to deal with a good problem.

We’d have these meetings where we would discuss our cloud storage bills and how the costs were mounting, and we had spreadsheets on spreadsheets, but really it was clear what we needed to do: move some of the big media to coldline storage.

So instead of spending another day talking about doing the thing, I decided to do the thing.

So I ran a job to export the file sizes in that bucket and load them into BigQuery. From BigQuery, I generated a manifest file with all the files in excess of 25MB (plus a few other rules, so that we would only delete the media we didn't really care about that was taking up space). There were about 3.5M files to delete.
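A minimal sketch of that manifest step, assuming the inventory comes back as (object name, size in bytes) pairs and that "25MB" means 25,000,000 bytes. The function names and the sample rows are hypothetical, and the "few other rules" mentioned above are not modeled here.

```python
import csv
import io

# Assumption: "25MB" is read as 25 * 1000 * 1000 bytes.
SIZE_THRESHOLD_BYTES = 25 * 1000 * 1000


def build_manifest(rows, threshold=SIZE_THRESHOLD_BYTES):
    """Return the object names whose size is strictly above the threshold.

    `rows` is an iterable of (object_name, size_in_bytes) pairs, for example
    the result of a query over the exported inventory (hypothetical shape).
    """
    return [name for name, size in rows if int(size) > threshold]


def manifest_to_csv(names):
    """Render the names as a one-column CSV, one object per line."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    for name in names:
        writer.writerow([name])
    return buf.getvalue()


if __name__ == "__main__":
    # Made-up sample rows, only to show the shape of the input.
    sample = [("a.png", 1_000), ("b.mp4", 30_000_000), ("c.gif", 25_000_000)]
    print(manifest_to_csv(build_manifest(sample)), end="")
```

Keeping the filter as a plain function makes it easy to spot-check a handful of rows before handing a multi-million-line manifest to anything that deletes data.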

So then I googled how to bulk delete these files, and learned that one way to do it would be to do a storage transfer into another bucket, and in that bucket, set a lifecycle policy where if a file is more than 0 days old, it gets deleted. So basically write the file to /dev/null. So I made a bucket called center_thumbnails_deletion.
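For illustration, the "more than 0 days old, delete it" rule can be written down as a lifecycle configuration. This is a sketch that assumes the JSON layout Cloud Storage documents for lifecycle rules (a `rule` list with an `action` and a `condition`); the helper name is hypothetical, and the exact policy used on the deletion bucket is not shown in this post.

```python
import json


def delete_after_days_policy(age_days=0):
    """Build a lifecycle config that deletes objects once they reach age_days."""
    return {
        "rule": [
            {
                "action": {"type": "Delete"},
                # age is counted in days since the object was created
                "condition": {"age": age_days},
            }
        ]
    }


if __name__ == "__main__":
    # The printed JSON could be saved to a file and applied to the bucket.
    print(json.dumps(delete_after_days_policy(), indent=2))
```

As far as I know, lifecycle actions run asynchronously, so objects that match the rule may sit in the bucket for a while before they are actually removed.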

And in that fateful form, I made one mistake: I fat-fingered us-east1 instead of us-west1 for the bucket region.

There are a couple of important differences to note between us-east1 (South Carolina) and /dev/null:

- /dev/null is a null device file.

- us-east1 is a data center in South Carolina.

- South Carolina is not /dev/null.
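A pre-flight check along these lines could catch a region mismatch before a transfer starts. This is a hedged sketch, not something from the original workflow: the function names are hypothetical, and it assumes the two bucket locations have already been looked up (for example from each bucket's metadata) and are passed in as strings.

```python
def normalize_location(location):
    """Lowercase and trim a location string so 'US-WEST1' matches 'us-west1'."""
    return location.strip().lower()


def check_same_region(source_location, destination_location):
    """Raise ValueError if the two bucket locations differ; return the region otherwise."""
    src = normalize_location(source_location)
    dst = normalize_location(destination_location)
    if src != dst:
        raise ValueError(
            f"source bucket is in {src} but destination is in {dst}; "
            "this transfer would move data across regions"
        )
    return src


if __name__ == "__main__":
    try:
        check_same_region("us-west1", "us-east1")
    except ValueError as err:
        print(f"refusing to start transfer: {err}")
```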

I started the storage transfer job, went home feeling like a champion (because the way to do the thing is to do the thing, right?).

I woke up and felt relief because I finally did the thing.

Throughout the day everything was great.

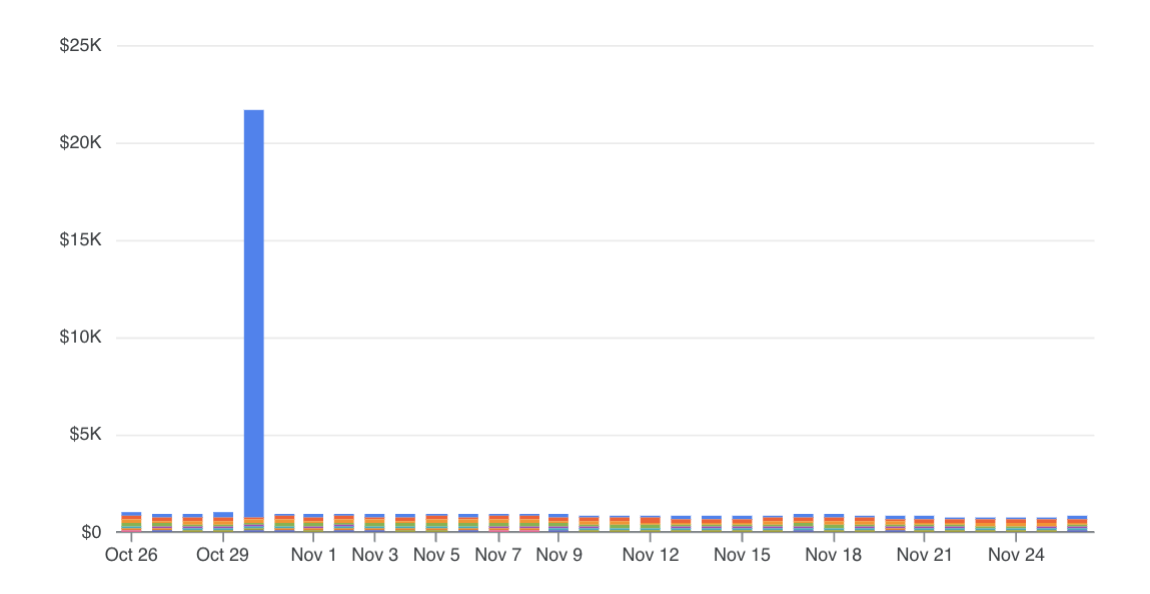

And then I got a ping about a threshold charge from Google Cloud on my Ramp statement. Hm, how did we spend so much money on cloud so quickly?

And that's when it hit me - this was probably related to my transfer from yesterday.

I didn’t send the data to /dev/null. I sent it to South Carolina.

There’s more to the story about how we reached out to Google and asked them to credit us, and how moving the data back cost a lot of money etc etc etc. But I did want to share a couple of really important points:

- Google Cloud (and other cloud providers) are incredibly powerful tools.

- With great power comes great responsibility.

- Take responsibility for your actions and own up to your mistakes.

For a little while I thought about asking for help offsetting the cost, but that would be like asking for a handout: Google Cloud didn't do anything wrong.

Sure, it would have been nice to have a Microsoft Clippy pop up and say, "Hey, it looks like you're about to spend $20,000 moving files across the country, you sure about that?"

But that's a "nice-to-have." Google Cloud Storage is designed to store files in the cloud, and it does that phenomenally well.

I spent a couple of days feeling stupid about having done this, but most of those feelings eventually went away.

But there is one feeling that stuck with me: I still believe, with the deepest and utmost conviction, that the way to do the thing is to do the thing.

Also, we are hiring: https://jobs.ashbyhq.com/center